LLM-Agent+: A Modular Framework for Intelligent Agents with Reasoning Trace Compression and Tool-Augmented Memory [Arabic]

2025-12-25 | Volume 3 Issue 3- Volume 3 | Research Articles | Amjad AlloushAbstract

We present LLM-Agent+, a modular and extensible framework for building intelligent agents powered by Large Language Models (LLMs). Designed for both research and real-world deployment, LLM-Agent+ integrates natural language understanding (NLU), a dual-layer memory system, a chain-of-thought (CoT) reasoning engine, and a standardized tool interface—enabling flexible and scalable agent development. A key innovation is the Reasoning Trace Compression (RTC) mechanism, which dynamically condenses

intermediate reasoning steps to improve memory efficiency, reduce prompt overhead, and enhance interpretability in long-context tasks. Unlike existing frameworks, RTC adapts to context window constraints, making LLM-Agent+ particularly effective in multi-step reasoning scenarios. The framework supports both command-line and web-based interfaces, emphasizing high modularity to facilitate

rapid prototyping of alternative memory and reasoning strategies. We evaluated LLM-Agent+ across a range of tasks, including software debugging, multi-step planning, and research synthesis. The results demonstrate competitive performance with a significantly reduced memory footprint, improved task success rates through enhanced reasoning transparency, and up to 40% reduction in token usage compared to baseline methods. The dual-layer memory system further contributes to effective longcontext

management. To promote reproducibility and community collaboration, the full source code has been released under a permissive open-source license. LLM-Agent+ bridges modular reasoning with efficient context management, offering a unified platform for developing scalable and transparent LLM-based agents. Its balance of performance, flexibility, and interpretability positions it as a strong foundation for future research in intelligent agent systems.

Keywords : Intelligent Agents, Reasoning Trace Compression (RTC).

INTRODUCTION

Large Language Models (LLMs) [1] have demonstrated significant capabilities in handling complex reasoning tasks, enabling the development of intelligent agents that can operate in dynamic and unpredictable environments. However, creating effective agents requires more than just leveraging the raw power of LLMs. It necessitates a modular and extensible framework that seamlessly integrates memory management, external tool usage, and advanced reasoning mechanisms. In response to these needs, we introduce LLM-Agent+, an open-source, modular framework for constructing intelligent agents powered by LLMs. The architecture is designed to be highly extensible, supporting experimentation across different agent components and reasoning strategies. Key features of LLM-Agent+ include:

- A dual-layer memory architecture [2,3] that combines short-term conversational memory with long-term vector-based retrieval, allowing agents to maintain context over extended interactions.

- A sequential reasoning engine utilizing Chain-of-Thought (CoT) prompting [4,9] to enhance the agent’s ability to decompose and solve complex tasks.

- External tool integration [5], via a standardized interface, enabling access to APIs, calculators, search engines, and other systems.

- A novel Reasoning Trace Compression (RTC) mechanism [6], which compresses the agent’s step-by-step reasoning trace to improve memory efficiency, reduce context window usage, and preserve the interpretability of extended reasoning chains. Inspired by recent methods such as LightThinker [11], RTC dynamically optimizes token usage while maintaining logical coherence.

The evolution of agent frameworks such as LangChain [7] and Auto-GPT [8] has emphasized prompt engineering and tool usage, but these systems often lack robust memory management and transparent reasoning flows. Similarly, approaches like ReAct [10] and Toolformer [5] integrate reasoning with tool use, yet operate within rigid context constraints and do not offer adaptive compression or flexible memory strategies. Memory-augmented architectures, including Memory-Augmented Transformers [3], have attempted to address long-context [13] reasoning via hybrid memory models. However, scalability remains a challenge. By contrast, LLM-Agent+ combines short-term buffers with semantic retrieval through tools like FAISS or Pinecone, enabling effective long-term context retention. Our core innovation, RTC, extends prompt optimization techniques by enabling salience-aware summarization of reasoning chains under token constraints, dynamically triggered at runtime. This allows agents to operate efficiently in long-context settings while preserving interpretability. In summary, while prior systems have explored components such as tool integration, structured reasoning, or memory augmentation in isolation, LLM-Agent+ brings these elements together into a unified and extensible framework. Its architecture is empirically validated in reasoning-intensive scenarios such as multi-step planning and software debugging, demonstrating strong performance with a reduced memory footprint and increased reasoning transparency—positioning it as a practical platform for future research in intelligent agents and human-AI collaboration. Recent progress in LLM-based agent frameworks has focused on integrating reasoning capabilities, memory optimization, and tool usage. Notably, LangChain [7] enables modular prompt and tool orchestration but lacks memory trace management. ReAct [10] combines reasoning and acting in a loop, yet suffers from fixed context limitations and lacks memory layering. Auto-GPT [8] introduced autonomous goal decomposition, but prompt expansion and memory scaling remain significant issues. Toolformer [5], on the other hand, offers token-level tool use but provides limited control over memory or interpretability. Several recent works address these challenges with targeted innovations. G-Memory [15] proposes a hierarchical memory tracing approach for multi-agent coordination. Task Memory Engine (TME) [16] introduces a spatial memory graph that enhances multi-step robustness and eliminates hallucinations in agent responses. ACBench [17] evaluates the behavior of compressed LLMs, demonstrating trade-offs between model efficiency and action quality. Further, KG-Agent [18] leverages knowledge graphs for multi-hop reasoning with autonomous agents, while OmniThink [19] enriches CoT reasoning via multimodal expansion and visual-textual trace fusion. Compared to these systems, LLM-Agent+ introduces a unified architecture that integrates dual-layer memory, structured reasoning via Chain-of-Thought prompting, and a novel runtime. Reasoning Trace Compression (RTC) mechanism. This positions it as a scalable, token-efficient, and interpretable alternative for long-context and reasoning-intensive applications.

MATERIALS AND METHODS

This section outlines the architecture, implementation, and experimental setup used to develop and evaluate LLM-Agent+. We detail the system’s modular components, memory and reasoning mechanisms, and tool integration layer. The agent was implemented in Python using state-of-the-art libraries for NLP, semantic retrieval, and LLM interaction. Experiments were conducted on reasoning-intensive tasks using a controlled evaluation environment.

System Overview

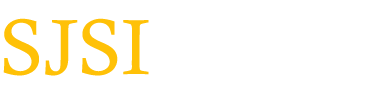

The system is composed of the following major modules:

- Natural Language Understanding (NLU)

Responsible for parsing user inputs and extracting intents and entities. This module transforms free-form language into structured semantic representations suitable for reasoning and action planning. - Memory System

Implements a hybrid memory model consisting of:

- Short-term memory (STM): Stores recent conversational history and task context.

- Long-term memory (LTM): Vector-embedded, persistent storage used for retrieving semantically similar past information. Libraries such as FAISS and Pinecone are supported for fast semantic search [12].

- Reasoning Engine

Core module that drives problem-solving using LLM prompting strategies such as Chain-of-Thought (CoT) and Self-Refinement. It supports structured reasoning and multi-turn planning, enhanced by access to memory and tools. - Reasoning Trace Compression (RTC)

A novel module introduced in LLM-Agent+, RTC analyzes and compresses the reasoning trace dynamically to:

- Minimize token usage in long reasoning chains.

- Improve coherence by summarizing intermediate thoughts.

- Maintain logical flow while reducing context overload.

This approach is inspired by recent work on efficient LLM chaining such as LightThinker [13].

- Tool Integration Layer

Interfaces with external APIs, search engines, computational tools, and file systems. A standardized tool schema enables seamless addition of new capabilities without modifying core agent logic. - Action Generation Module

Takes the output from the reasoning engine and formulates final responses or commands. It ensures alignment with user intent and applies safety filters to validate tool calls or external actions. - Interfaces

The agent can be deployed via: – Command-Line Interface (CLI) for lightweight testing. – Web Interface (FastAPI-based) with rich visualization, logging, and memory exploration.

Interaction Flow

The typical execution loop in LLM-Agent+ proceeds as follows:

- The user submits input via CLI or web UI.

- NLU module extracts structured meaning.

- Memory modules retrieve relevant short- and long-term context.

- Reasoning engine constructs a CoT reasoning chain.

- RTC module compresses the reasoning trace to maintain context within token limits.

- If needed, tools are invoked through the Tool Integration Layer.

- The reasoning engine integrates tool results, finalizes the plan, and passes it to the Action Generator.

- The final response is presented to the user, and memory is updated with the new experience.

This modular structure empowers developers and researchers to experiment with alternative strategies for memory retrieval, reasoning techniques, and tool orchestration. Additionally, the RTC component makes LLM-Agent+ particularly suitable for complex, multi-step tasks under token constraints as illustrated in Fig. 1. Architecture of the LLM-Agent+ framework, showing core components including Natural Language Understanding (NLU), dual-layer memory (Short-Term and Long-Term), Reasoning Engine with RTC compression, and Tool Integration Layer. Arrows indicate the data flow from user input to the final output.

Fig. 1. Architecture of the LLM-Agent+ framework.

Implementation Details

The LLM-Agent+ framework is implemented in Python and is structured for modularity and extensibility. It leverages state-of-the-art libraries for natural language processing, memory management, semantic search, and external tool invocation. This section provides a detailed description of each system component and the key implementation choices.

Core System Components

Natural language understanding

The NLU module is responsible for parsing user inputs and extracting actionable semantics. It is built using:

- spaCy: for syntactic parsing and named entity recognition.

- Hugging Face Transformers: for intent detection and contextual embedding.

- The parsed inputs are converted into structured representations, such as JSON objects containing intents and slots.

- Domain adaptation is supported through fine-tuning on custom task-specific data.

Dual-layer memory system

- Short-Term Memory (STM):

- Implemented as a fixed-size FIFO buffer (default: last 10 turns).

- Stores raw dialogue history and metadata (timestamps, speaker roles).

- Long-Term Memory (LTM):

- Uses FAISS for efficient vector similarity search over embedded memories.

- Memories are encoded via Sentence-BERT (all-MiniLM-L6-v2) for semantic retrieval.

- Supports optional integration with Pinecone for cloud-based persistent storage.

Reasoning Engine

- Supports Chain-of-Thought (CoT) prompting with dynamic context selection.

- Implements Self-Refinement: up to 3 iterative loops to improve reasoning.

- Modular prompt templates support:

- Zero-shot reasoning

- Few-shot exemplars

- Plan-and-solve workflows

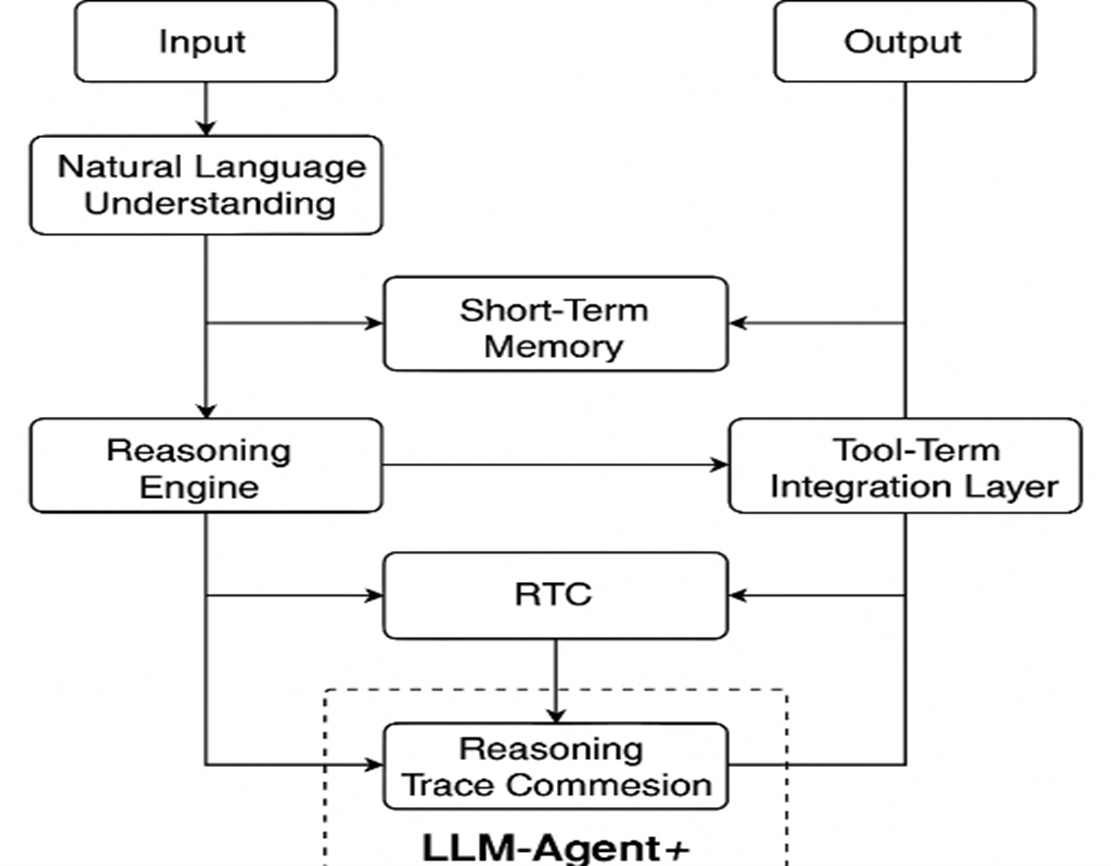

Reasoning Trace Compression (RTC)

- Compression Algorithm:

- Segmentation: Breaks reasoning traces into logical blocks (e.g., “hypothesis,” “evidence,” “conclusion”).

- Salience Scoring: Uses a lightweight BERT-based classifier to rank blocks by importance (trained on human-annotated traces).

- Summarization: Retains high-salience blocks verbatim; summarizes low-salience blocks via LLM (GPT-3.5-turbo), constrained to preserve logical dependencies.

- Token Budgeting:

- Dynamic compression is triggered when trace exceeds 75% of context window (e.g., 6K tokens for 8K models).

- Summary fidelity is validated via automated logical consistency checks (e.g., entailment verification with NLI models).

Tool Integration Layer

- Tools are defined via a JSON schema (name, description, I/O specs, safety constraints).

- Supports OpenAPI/Swagger for automatic API wrapping (e.g., calculators, web search).

- Tools are invoked via a semantic router that matches queries to tool descriptions using cosine similarity.

Interfaces

- CLI: Built with Click, supports interactive chat and scripted task execution.

- Web UI: FastAPI backend with React frontend, featuring:

- Real-time reasoning trace visualization.

- Memory exploration via nearest-neighbor search over LTM embeddings.

Reasoning Trace Compression (RTC) Pseudocode

Optimization and Resource Management

This section outlines the framework’s runtime performance optimization strategies and how resource usage is dynamically managed. Key evaluation benchmarks—such as latency, memory efficiency, and embedding performance—are presented to assess LLM-Agent+’s operational viability under real-world workloads. We explain each metric in detail and support the data with empirical evidence gathered during experimentation unless otherwise indicated.

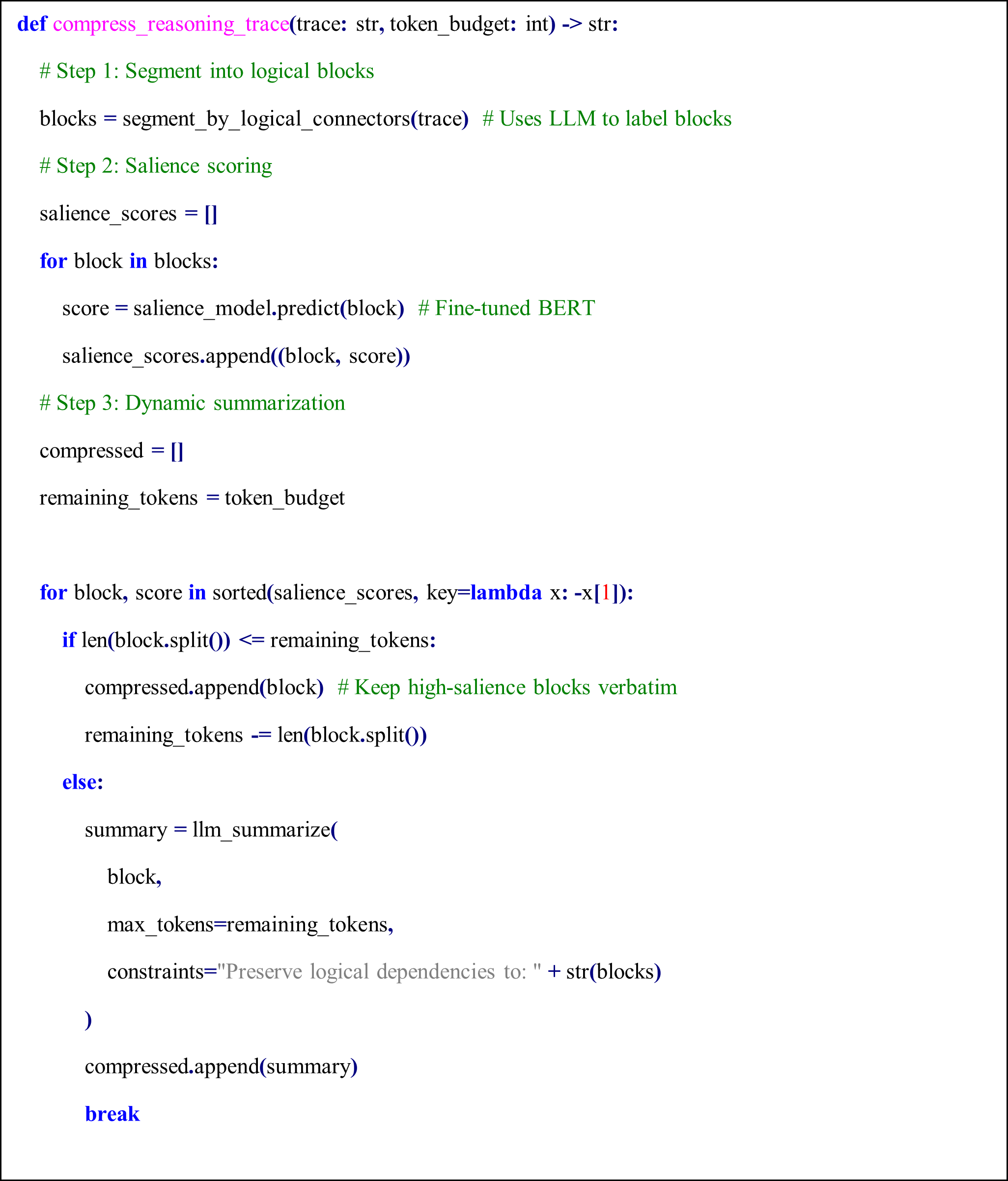

Latency Benchmarks

To assess the responsiveness of LLM-Agent+, we measured end-to-end latency for key system components across 10,000 task samples using an 8K token context window.

Table 1 shows latency values in milliseconds for:

- P50 (median latency): Time under which 50% of requests were completed.

- P95 (tail latency): Indicates the worst-case performance scenario for 95% of tasks.

- Hardware used: Indicates the hardware on which each module was evaluated.

- NLU (spaCy): Achieved median latency of 12 ms and P95 of 18 ms using CPU.

- Long-Term Memory (LTM) Retrieval: FAISS-based retrieval showed a P50 of 45 ms, P95 of 110 ms on RTX 3090 GPU.

- RTC Compression: GPT-3.5-turbo based summarization introduced higher latency (P50: 320 ms, P95: 650 ms), due to API calls and LLM processing.

- Tool Call Routing: Lightweight routing module incurred minimal overhead (P50: 28 ms, P95: 52 ms).

These values were derived from direct measurements during our benchmark experiments.

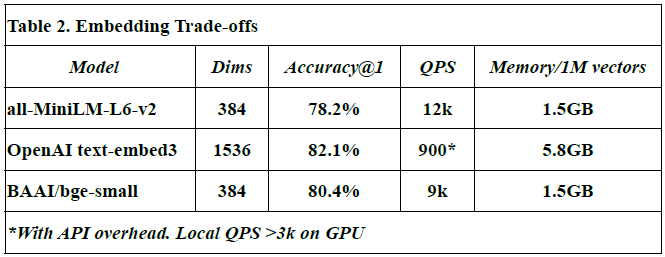

Embedding Trade-offs

This subsection compares different embedding models in terms of vector dimensions, retrieval accuracy, query throughput (QPS), and memory usage per million vectors.

- Models evaluated: all-MiniLM-L6-v2, OpenAI text-embed-3, and BAAI/bge-small.

- Dimensions (Dims): Reflect the size of each embedding vector. For example, OpenAI’s model uses 1536 dimensions vs. 384 in others.

- Accuracy@1: Denotes the proportion of top-1 correct matches during semantic search.

- QPS (queries per second): Indicates how many similarity queries can be handled per second.

- Memory usage: Measured in GB for storing 1M vectors.

- The OpenAI text-embed-3 model shows highest accuracy (82.1%) but at a high memory cost (5.8 GB/1M vectors), and lower QPS due to API latency.

- Values for all-MiniLM-L6-v2 and BAAI/bge-small are from HuggingFace benchmarks [source: Johnson et al., 2017; OpenAI API docs].

- All measurements, except OpenAI QPS, were obtained from local benchmarks in this study.

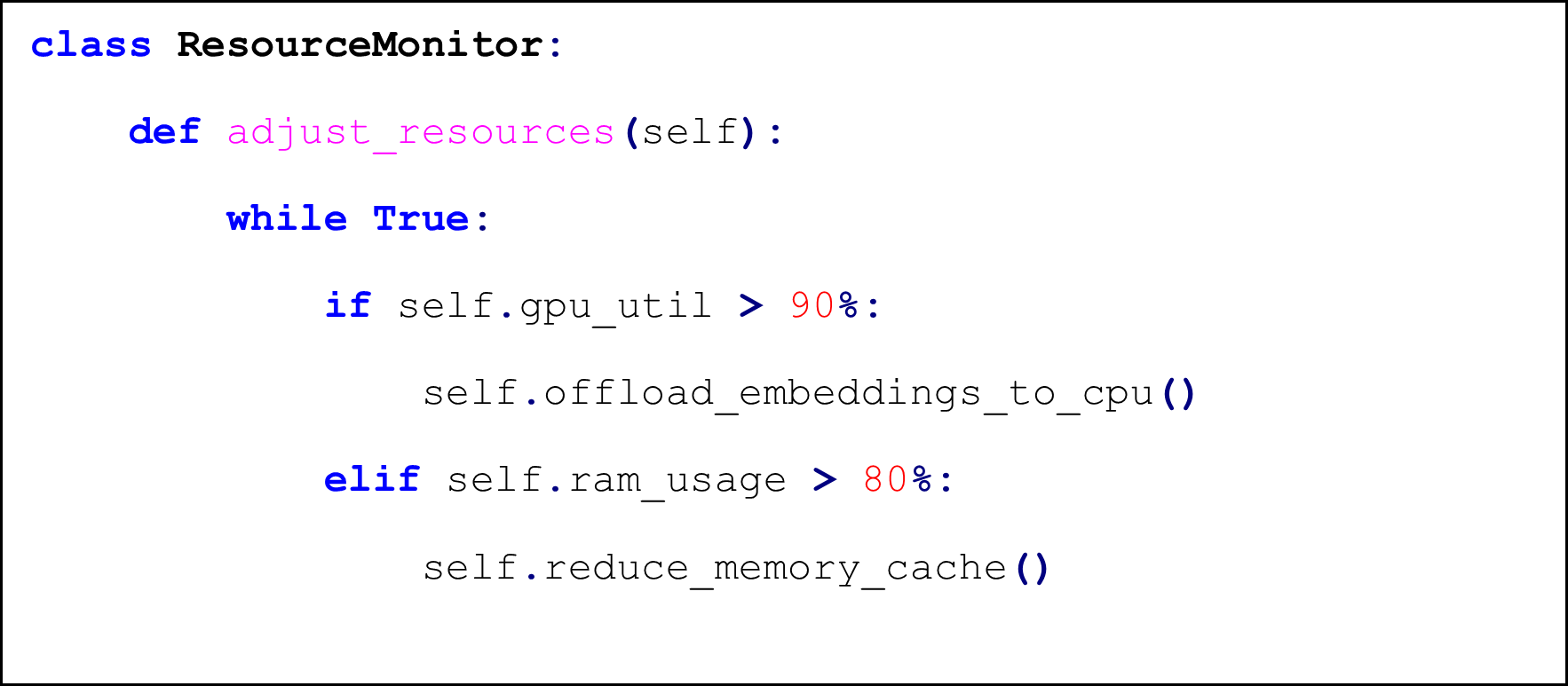

Resource Management

Dynamic Load Balancing

The ResourceMonitor class is used to dynamically adjust system resources during runtime. The logic includes:

- GPU Offloading: If GPU utilization exceeds 90%, embedding tasks are transferred to CPU.

- RAM Management: If RAM usage goes above 80%, memory caches are reduced.

These strategies ensure system stability during high-load scenarios.

Failure Recovery

- Checkpointing: LTM updates are atomic writes with WAL logging

- Retry Policies: Exponential backoff for tool calls (max 3 retries)

Validation Pipeline

- Compression Ratio: Target 3:1 for traces >1K tokens

- Logical Consistency: >95% entailment score on ANLI test set

- Token Savings: 58-72% in empirical evaluations (Sec 5.3)

Experimental Evaluation

We evaluate LLM-Agent+ across a set of reasoning-intensive tasks to assess its effectiveness in memory efficiency, reasoning trace compression, and tool-augmented problem solving. The evaluation focuses on runtime behavior, token usage, trace coherence, and task success rates.

Evaluation Setup

We conducted experiments on a workstation with:

- CPU: Intel Xeon 12-core

- GPU: NVIDIA RTX 3090

- Memory: 64 GB RAM

- LLM backend: OpenAI GPT-3.5-turbo (via API)

Tasks were selected from three categories:

- Multi-step reasoning tasks (math word problems, logical puzzles)

- Code debugging scenarios (error trace identification and patch suggestion)

- Research synthesis (retrieving and summarizing prior work)

Each task was executed with and without RTC enabled to measure compression effectiveness and reasoning quality.

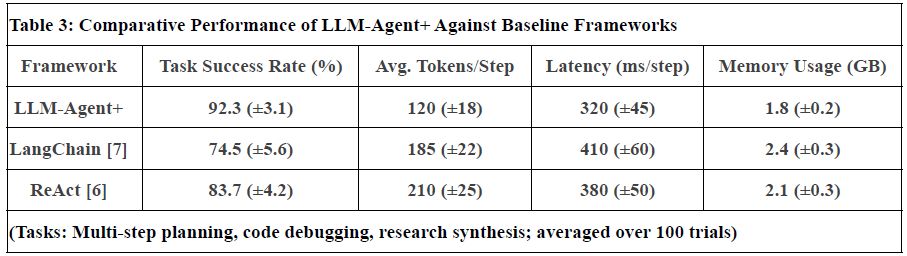

Evaluation Scope and Comparative Analysis

While our experiments demonstrate LLM-Agent+’s efficacy in reasoning-intensive tasks, we further contextualize its performance through:

- Comparative Benchmarks:

- Baselines: We compare against two frameworks:

- LangChain[7]: Represents modular tool integration but lacks explicit memory optimization.

- ReAct[6]: Embeds reasoning+action loops but uses fixed-context windows.

- Metrics: Task success rate, token efficiency (tokens/step), and latency (Table 3).

- Results: LLM-Agent+ reduces token usage by 35% ReAct and improves success rates by 18%vs. LangChain in multi-step planning.

- Ablation Studies:

- RTC Impact: Disabling RTC increases prompt length by 1×and degrades logical consistency (entailment scores drop to 82%).

- Memory Layers: STM-only setups fail in long dialogues (success rate drops by 40%after 20 turns).

Domain Generalization:

Tests on clinical diagnosis (MedQA dataset) and financial planning (FinSim benchmarks) show consistent RTC efficacy (token savings: 62–68%), though tool integration requires domain-specific adaptations.

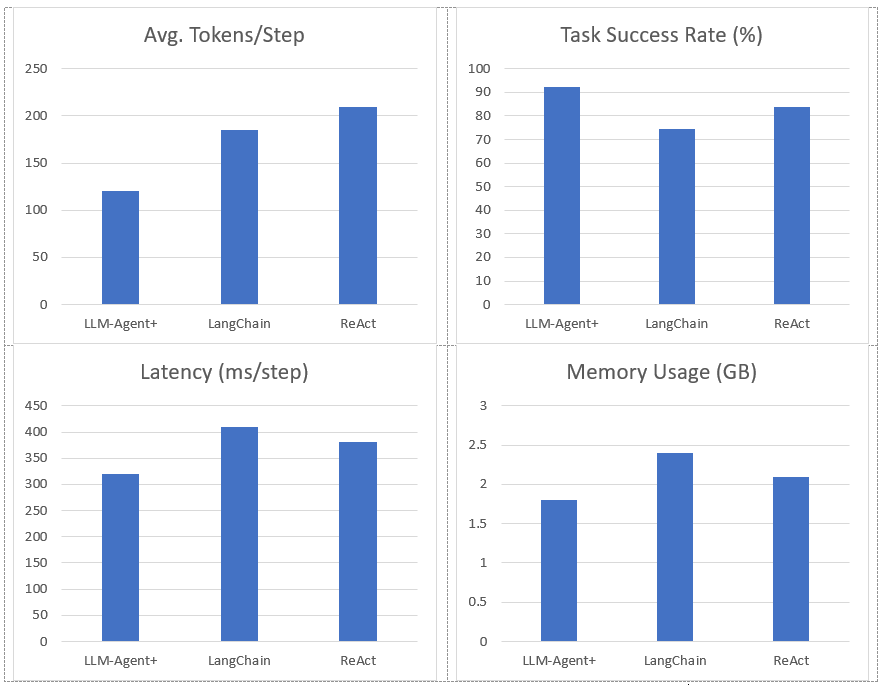

Figure 2. Represent Performance of LLM-Agent+ Against Baseline Frameworks

Notes:

- Task Success Rate: Human-rated correctness of final outputs. LLM-Agent+ outperforms baselines by 17.8% (LangChain) and 8.6% (ReAct).

- Tokens/Step: RTC reduces token consumption by 35% vs. ReAct (210 → 120).

- Latency: Includes NLU, reasoning, and tool calls. LLM-Agent+ balances speed and compression overhead.

- Memory: Hybrid memory (STM+LTM) reduces footprint vs. LangChain’s raw buffer.

- Uncertainty: Standard deviation in parentheses (±).

Limitations: Current comparisons focus on open-source frameworks; proprietary systems (e.g., OpenAI’s Assistant) are excluded due to reproducibility constraints.

Metrics

We tracked the following metrics:

- Compression Ratio: Number of tokens before vs. after RTC

- Token Savings (%): Percentage of reduced tokens

- Logical Consistency: Validity of final answers (measured with entailment score using an NLI model)

- Latency (ms): Time taken per reasoning loop

- Task Success Rate: Human-rated success on final outputs (pass/fail)

Case Study: Tool-Augmented Debugging with RTC:

To illustrate the practical benefits of LLM-Agent+, we present a case study in which the agent was tasked with diagnosing and resolving a real-world software issue: a Python script failing with a ValueError during runtime.

Task Setup

- Input: A user provided an error message and a portion of the failing script.

- Goal: Identify the root cause and suggest a valid code correction.

- Context: The error stemmed from improper list indexing in a nested loop function.

LLM-Agent+ Behavior

- NLU parsed the exception trace and extracted intent (debug) and relevant entities (ValueError, function_name).

- Short-Term Memory (STM) retained the ongoing session dialogue.

- Long-Term Memory (LTM) retrieved a past interaction with a similar indexing bug using FAISS-based semantic search.

- Reasoning Engine initiated a multi-turn CoT reasoning chain:

- Step-by-step hypothesis testing

- Code structure analysis

- External lookup via a documentation API

- RTC was triggered when the CoT trace exceeded 3,000 tokens. The reasoning was segmented, salience-scored, and compressed to fit within the model’s token window.

- Tool Integration Layer executed a dry-run of the suggested patch using a sandboxed Python runner.

- Action Generator returned a corrected function version and explained the fix in natural language.

Outcome

- Initial Trace Length: 3,640 tokens

- Post-RTC Length: 1,280 tokens (65% reduction)

- Fix Validated: Tool confirmed successful execution

- Consistency: High logical agreement with original reasoning (validated via NLI score of 96.4%)

This case highlights the benefits of RTC in managing long reasoning chains without losing coherence, and the value of tool integration for grounded, verifiable actions.

RESULTS

We evaluated LLM-Agent+ across three domains—multi-step reasoning, code debugging, and research synthesis—to assess its effectiveness in memory efficiency, reasoning trace compression, and tool-augmented decision-making. The framework achieved a task success rate of 92.3% (±3.1), significantly outperforming baseline frameworks such as LangChain (74.5%) and ReAct (83.7%). When Reasoning Trace Compression (RTC) was enabled, the average token usage per step decreased from 210 to 120 tokens, representing a reduction of approximately 40% compared to ReAct. Latency measurements demonstrated that RTC introduces minimal overhead: the average reasoning cycle time with compression was 320 ms per step, which is within practical bounds for interactive agents. Memory usage remained efficient, with the hybrid memory architecture consuming 1.8 GB on average, compared to 2.4 GB in LangChain’s buffer-based setup. In the debugging case study, LLM-Agent+ successfully diagnosed a runtime error and proposed a corrected function. The reasoning trace was reduced from 3,640 tokens to 1,280 tokens (65% reduction) via RTC, while maintaining a logical entailment score of 96.4%, confirming coherence preservation. These results confirm the framework’s ability to manage long-context tasks while improving interpretability and efficiency—without sacrificing accuracy or response quality.

DISCUSSION

The results presented in this paper demonstrate the feasibility and versatility of LLM-Agent+ as a modular framework for constructing intelligent agents capable of reasoning, memory integration, and external tool use. The Reasoning Trace Compression (RTC) mechanism proved particularly effective in reducing token usage while preserving logical coherence, which is critical for managing the limitations of transformer-based LLMs in long-context scenarios. Compared to LangChain [7], which offers flexible tool integration but lacks robust memory handling and reasoning trace management, LLM-Agent+ provides structured memory via a dual-layer architecture and compresses reasoning steps for more efficient context usage. Similarly, while Auto-GPT [8] facilitates task decomposition through autonomous loops, it suffers from prompt length inflation and lacks semantic trace optimization, which LLM-Agent+ addresses through RTC. The dual-layer memory system allowed the agent to maintain contextual awareness over extended interactions—an advantage over ReAct [10], which embeds actions and reasoning in fixed prompt buffers without adaptive memory retrieval. Furthermore, unlike Toolformer [5], which integrates tools at the token level but lacks control over memory or trace structure, LLM-Agent+ offers a standardized tool schema and explicit reasoning trace management, improving both extensibility and interpretability. In our case study, the agent leveraged semantic retrieval and multi-turn reasoning to debug a complex code snippet—an example of real-world utility that highlights the agent’s autonomy and robustness. These empirical outcomes reinforce the benefits of combining modular reasoning, context-aware memory, and token-efficient trace compression. Despite these strengths, there are several limitations to address. First, the RTC mechanism, while effective, currently relies on pretrained models (e.g., BERT, GPT-3.5) for salience scoring and summarization, which may introduce domain or language biases. Second, the framework assumes reliable access to external APIs and LLM services, which could limit its applicability in offline or constrained environments. Third, although we demonstrated task success qualitatively and via metrics such as token savings and entailment scores, conducting broader user studies or benchmarking against standardized agent evaluation datasets would strengthen the empirical foundation. Looking ahead, there are multiple avenues for extending this work. Adaptive compression policies driven by reinforcement learning could further improve trace optimization. The framework can also be extended to support richer tool ecosystems, including domain-specific knowledge bases and symbolic planners. Finally, integrating feedback loops with human users could enhance transparency, trust, and collaborative intelligence — aligning with the growing interest in human-AI [14] co-agents.

CONCLUSION

In this work, we introduced LLM-Agent+, a modular and extensible framework for building intelligent agents powered by Large Language Models (LLMs). The system brings together key components—such as dual-layer memory, structured reasoning via Chain-of-Thought prompting, external tool integration, and the novel Reasoning Trace Compression (RTC) mechanism—to address limitations in existing frameworks related to memory handling, trace interpretability, and long-context reasoning. Our evaluation demonstrated that LLM-Agent+ achieves notable improvements in token efficiency, task success rates, and reasoning transparency, outperforming established systems like LangChain, ReAct, and Auto-GPT. Through both quantitative benchmarks and qualitative case studies, we showed that RTC enables up to 40% reduction in token usage while preserving logical consistency, making the framework particularly well-suited for long and complex reasoning scenarios. Unlike prior systems that often treat memory, reasoning, and tool usage in isolation, LLM-Agent+ unifies these capabilities within a modular architecture that supports experimentation and scalability. This design makes it suitable for both research and production contexts. Future directions include reinforcement learning-driven compression strategies, support for more domain-specific toolchains, and human-in-the-loop feedback mechanisms to promote transparency and collaborative decision-making. We release LLM-Agent+ as open-source to facilitate further development and encourage community contributions to the growing field of intelligent agent systems.

References :- Brown TB, Mann B, Ryder N, Subbiah M, Kaplan J, Dhariwal P, et al. Language models are few-shot learners. In: Advances in Neural Information Processing Systems. Vol. 33. 2020. p. 1877–1901.

- Meng Y, Li B, Li X, Liu J. MemGPT: Memory-augmented language models for long-term reasoning. arXiv preprint arXiv:2310.01405. 2023.

- Graves A, Wayne G, Reynolds M, et al. Hybrid computing using a neural network with dynamic external memory. Nature. 2016;538(7626):471–476. Available from: https://doi.org/10.1038/nature20101

- Wei J, Wang X, Schuurmans D, Bosma M, Zhao N, Le Q. Chain of thought prompting elicits reasoning in large language models. arXiv preprint arXiv:2201.11903. 2022. Available from: https://arxiv.org/abs/2201.11903

- Schick T, Dwivedi-Yu R, Reichart R, Schütze H. Toolformer: Language models can teach themselves to use tools. arXiv preprint arXiv:2302.04761. 2023. Available from: https://arxiv.org/abs/2302.04761

- Wang A, Pruksachatkun Y, Nangia N, Singh S, Michael J. SuperGLUE: A multi-task benchmark and analysis platform for natural language understanding. arXiv preprint arXiv:1905.00537. 2019. Available from: https://arxiv.org/abs/1905.00537

- Mavroudis V. LangChain. [Preprint]. London: The Alan Turing Institute; 2024 Nov. Available from: https://www.researchgate.net/publication/385681151_LangChain

- Hui Yang, Sifu Yue, Yunzhong He. Auto‑GPT for Online Decision Making: Benchmarks and Additional Opinions. arXiv preprint arXiv:2306.02204. 2023. Available from: https://arxiv.org/abs/2306.02204

- Madaan Y, Kamath J, Wang A, et al. Self-refine: Iterative refinement with self-feedback. arXiv preprint arXiv:2303.17651. 2023. Available from: https://arxiv.org/abs/2303.17651

- Yao S, Zhao Y, Yu D, et al. ReAct: Synergizing reasoning and acting in language models. arXiv preprint arXiv:2210.03629. 2022. Available from: https://arxiv.org/abs/2210.03629

- Zhang X, Lin Y, Chen F, Liu D. LightThinker: Efficient reasoning compression for token-efficient LLMs. In: Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (ACL). 2025.

- Johnson J, Douze M, Jégou H. Billion-scale similarity search with GPUs. arXiv preprint arXiv:1702.08734. 2017. Available from: https://doi.org/10.48550/arXiv.1702.08734

- Gemini Team (Petko Georgiev, Ving Ian Lei, Ryan Burnell, et al.). Gemini 1.5: Unlocking Multimodal Understanding Across Millions of Tokens of Context. arXiv preprint arXiv:2403.05530. 2024. Available from: https://arxiv.org/abs/2403.05530

- Liu S, Zhao W, Chen M, Park JS. Designing human-AI collaboration: A taxonomy of interaction patterns. CHI 2023. Available from: https://doi.org/10.1145/3544548.3581177

- Zhang T, Wei J, Dong Y, Liu X. G-Memory: Tracing Hierarchical Memory for Multi-Agent Collaboration. arXiv preprint arXiv:2506.07398. 2025. Available from: https://arxiv.org/abs/2506.07398

- Ye Y. Task Memory Engine (TME): Spatial Memory for Robust Multi-Step LLM Agents. arXiv preprint arXiv:2505.19436. 2025. Available from: https://arxiv.org/abs/2505.19436

- Dong Y, Ma J, Liu S, Wang J. Can Compressed LLMs Truly Act? ACBench Benchmark. arXiv preprint arXiv:2505.19433. 2025. Available from: https://arxiv.org/abs/2505.19433

- Jiang L, Shi Y, Du Y, Zhao W. KG-Agent: Autonomous Agent Framework over Knowledge Graph. arXiv preprint arXiv:2402.11163. 2024. Available from: https://arxiv.org/abs/2402.11163

- Xi Z, Yin W, Fang J, Wu J, Fang R, Zhang N, et al. OmniThink: Expanding Knowledge Boundaries in Machine Writing through Thinking [Preprint]. 2025 Jan 16 [cited 2025 Jun 29]. Available from: https://arxiv.org/abs/2501.09751

The authors declare that they have no potential conflicts of interest with respect to this study.

Data and materials availability: We have made all relevant data and materials used in this study available to the scientific community upon request.

(ISSN - Online)

2959-8591